Mean Squared Error (MSE) (L2 loss function, Euclidean loss) and Root Mean Squared Error (RMSE) - Python for Integrated Circuits - - An Online Book - |

||||||||

| Python for Integrated Circuits http://www.globalsino.com/ICs/ | ||||||||

| Chapter/Index: Introduction | A | B | C | D | E | F | G | H | I | J | K | L | M | N | O | P | Q | R | S | T | U | V | W | X | Y | Z | Appendix | ||||||||

================================================================================= The L2 loss function, also known as the Euclidean loss or mean squared error (MSE), is a commonly used loss function in machine learning. It measures the squared differences between predicted and actual values. In calculation of the MSE, we typically have two datasets: one with actual observed values and another with predicted or estimated values. The formula calculates the squared difference between each pair of corresponding values, averages these squared differences over all data points (from i=1 to n), and returns the MSE as a measure of the average squared error between the actual and predicted values. Minimizing the L2 loss helps in finding model parameters that make the predicted values close to the actual values. It is particularly common in regression problems. Mean squared error (MSE) is defined as the average of the squared differences between the estimated values and the true values, given by, The formula for L2 loss in Equation 4068a can be re-written as:

where,

hθ(xi) is the predicted output for the ith data point. The 1/2 factor is included to simplify the derivative of the loss during optimization. In linear regression, a goal is to minimize the least squares (OLS) or mean squared error (MSE) term, which measures the error between the predicted values and the actual values (i), below, Term 3910ia with L2 regularization (Ridge Regression) becomes the term below, Higher-order polynomial models, such as fifth-order polynomials, are capable of fitting training data very closely, which can result in a very low training set error as shown in Figure 4068a. However, they are prone to overfitting. Overfit models memorize the training data and may not generalize well to unseen data. The low training error may not reflect the model's performance on new, unseen data, and it could lead to poor generalization.

(a)

(b)

Figure 4068b shows a comparison between variances with and without regularization. The variance represents the mean squared error (MSE) between the model predictions and the actual data points. Both cases used random distributed data as dataset. The variances for both cases without and with regularization are 0.71 and 0.67, respectively. The variance without regularization (alpha=0) is slightly higher than the variance with regularization (alpha=1). However, in some cases, the difference is quite small so that the effect of regularization may vary depending on the dataset and the specific parameters.

(a)

(b) Figure 4068b. Comparison between variances: (a) without, and (b) with regularization. (code) Figure 4068c shows the normalization effect on Mean squared error (MSE).

(a)

(b)

The general trend of the test error decreases as the square root of the training set size (

On the other hand, Root Mean Squared Error (RMSE) is the square root of the mean of the squared errors:

============================================ Linear Regression: In linear regression, the true loss is typically defined as the mean squared error (MSE): Here, consists of the parameters and θ1, and is the MSE. Here's a Python program to plot the Mean Squared Error (MSE) as a function of a parameter for a simple linear regression example. Code: In this script:

============================================ To plot the loss (MSE) versus epoch for a machine learning training process, we typically need training data and a model to train. Here's a Python script using the popular deep learning library TensorFlow and its Keras API to demonstrate how to create such a plot for a simple linear regression model. Code: In this script:

===================== To visualize both training and validation losses during the training process, we can use TensorFlow/Keras's built-in support for validation data. Code: In this updated script:

This plot helps us visualize how the model performs on both the training and validation datasets throughout the training process, which is crucial for monitoring model generalization and potential overfitting. In the context of monitoring training and validation loss curves, a better performance is typically indicated by the following curve shapes:

Here's a breakdown of the curve shapes:

Therefore, in terms of curve shapes, a better performance is often associated with a training loss that decreases and a validation loss that converges and stabilizes. The point where the validation loss starts to increase slightly (after initially decreasing) is often the sweet spot for model selection, as it indicates the model's ability to generalize without overfitting to the training data. The dependence of training loss and validation loss on the number of epochs (Epoch) can be expressed using equations that describe how these losses change over the course of training. Typically, these equations are defined as follows: Training Loss (L_train):

Validation Loss (L_val):

The specific form of ftrain(Epoch) and fval(Epoch) depends on factors like the loss function, optimization algorithm, and the data being used. For example, in the case of Mean Squared Error (MSE) loss with stochastic gradient descent (SGD) optimization, ftrain(Epoch) and fval(Epoch) would involve the computation of the loss over the training and validation datasets at each epoch. These equations provide a general framework for understanding how training and validation losses change with the number of training epochs. The goal during training is to minimize the training loss while ensuring that the validation loss remains low without increasing significantly, indicating good generalization to unseen data. In well-performed training, it's not necessary for the training and validation losses to overlap with each other. [1,2] In fact, it's common for the training loss to be lower than the validation loss. Here's why:

In a well-performing model:

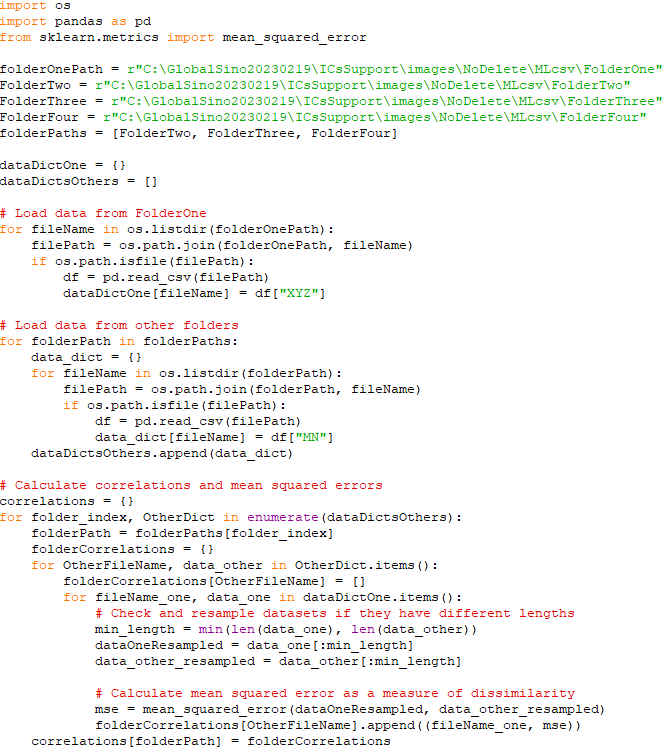

So, it's common for the validation loss to be slightly higher than the training loss, especially as training progresses. The key is that the gap between the two losses should not widen significantly, and the validation loss should not exhibit a sharp increase. A widening gap or a sharp increase in the validation loss would indicate potential overfitting, which is not desirable. Therefore, while it's not necessary for the training and validation losses to overlap, it's essential for the validation loss to remain reasonable and not show signs of deteriorating performance as training progresses. The primary goal is to have a well-generalizing model with a low validation loss. ============================================ Generalization Error: In this example, we'll generate synthetic data and fit a polynomial regression model to it. We'll then calculate and visualize the training error and test error (generalization error) as the polynomial degree increases, demonstrating the trade-off between underfitting and overfitting. Code: This script generates a plot showing how the training error and test error change as the polynomial degree increases. It illustrates the concept of generalization error by demonstrating the trade-off between underfitting (high training and test error) and overfitting (low training error but high test error). To find the right balance between underfitting and overfitting, we typically use techniques like cross-validation and validation datasets to assess model performance. These techniques help us select a model that generalizes well to unseen data and doesn't underfit or overfit. In addition to this simplified mathematical description, we can also use more complex metrics like learning curves, bias-variance trade-off analysis, or measures like the mean squared error (MSE) to assess the level of underfitting or overfitting in the models. ============================================ Correlations/similarity/dissimilarity between csv data using mean squared error (MSE):

Reads data from multiple folders, calculates the mean squared error as a measure of dissimilarity between the data in "FolderOne" and each other folder, finds the best match for each file in the other folders, and computes overall correlations for each folder based on the MSE values. It then prints the results, providing insights into the similarity/dissimilarity between the data in "FolderOne" and the other folders. Note that the script below is not a machine learning script. Code: ============================================

[1] Chiyuan Zhang, Samy Bengio, Moritz Hardt, Benjamin Recht, and Oriol Vinyals, Understanding Deep Learning Requires Rethinking Generalization, (2017).

|

||||||||

| ================================================================================= | ||||||||

|

|

||||||||

--------------------------------- [3910ia]

--------------------------------- [3910ia]  --------------------------------- [3910ib]

--------------------------------- [3910ib]

------------------------------------------- [4068ab]

------------------------------------------- [4068ab]